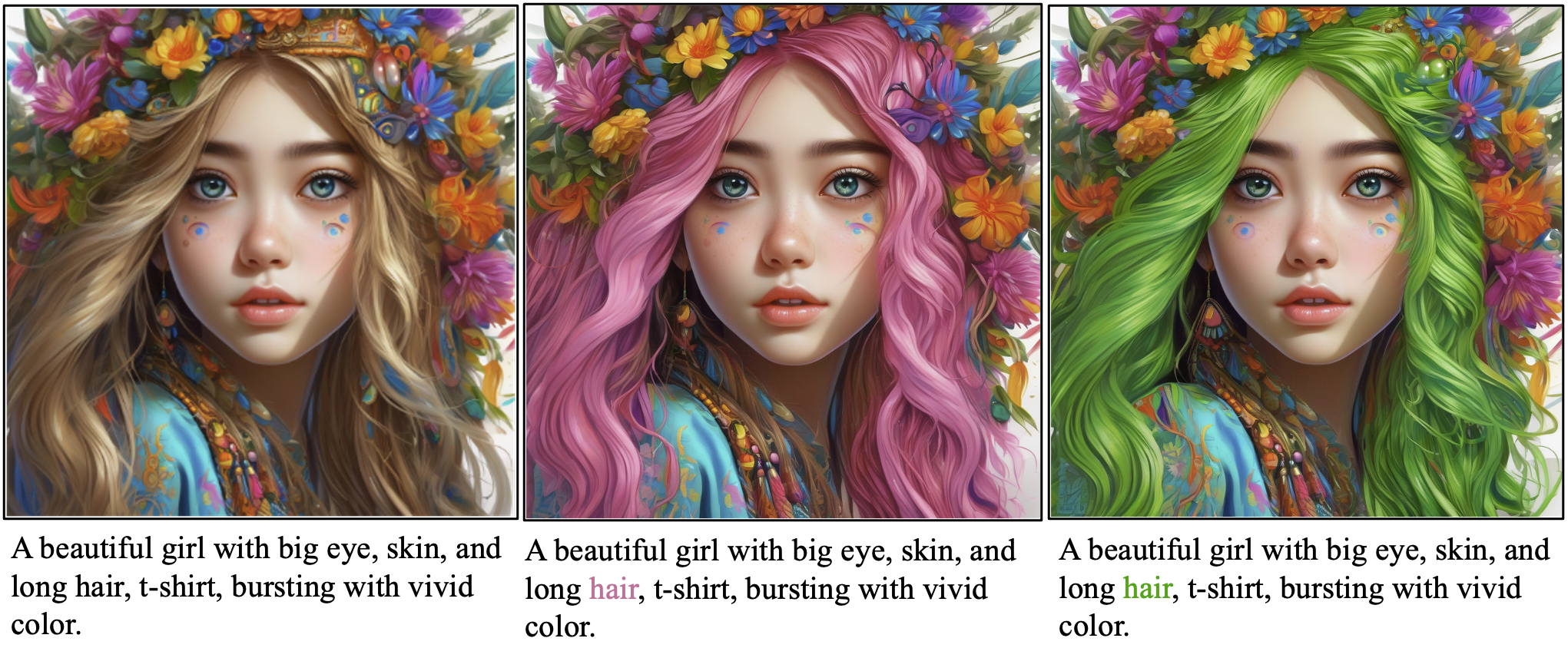

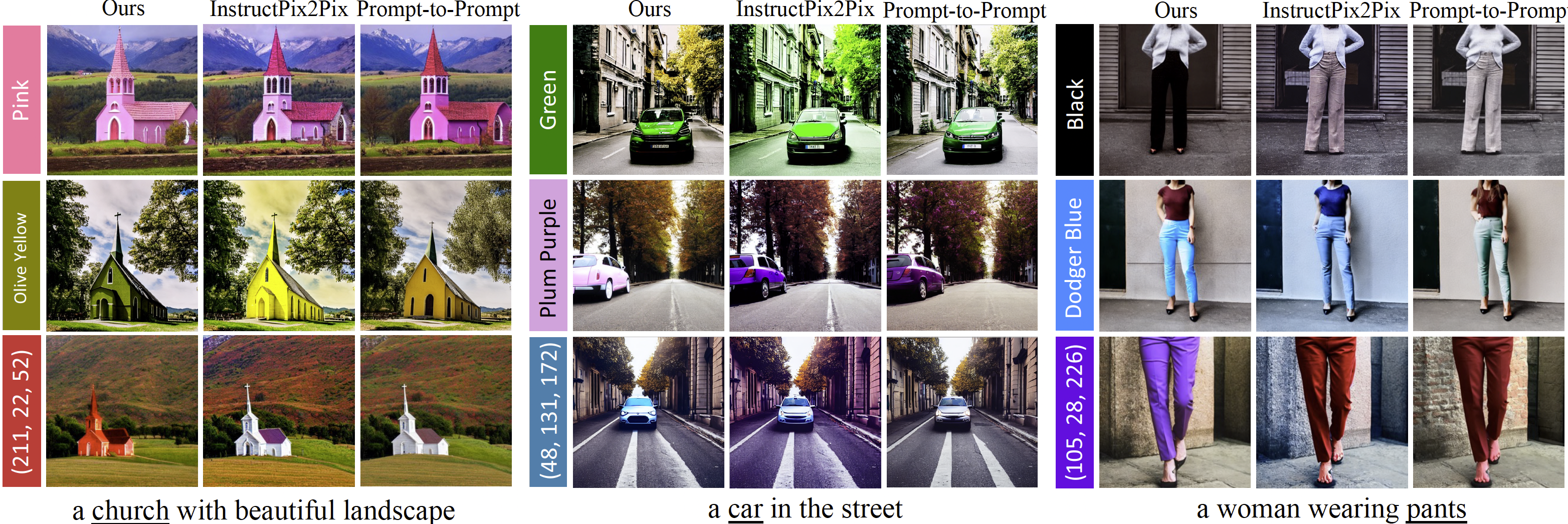

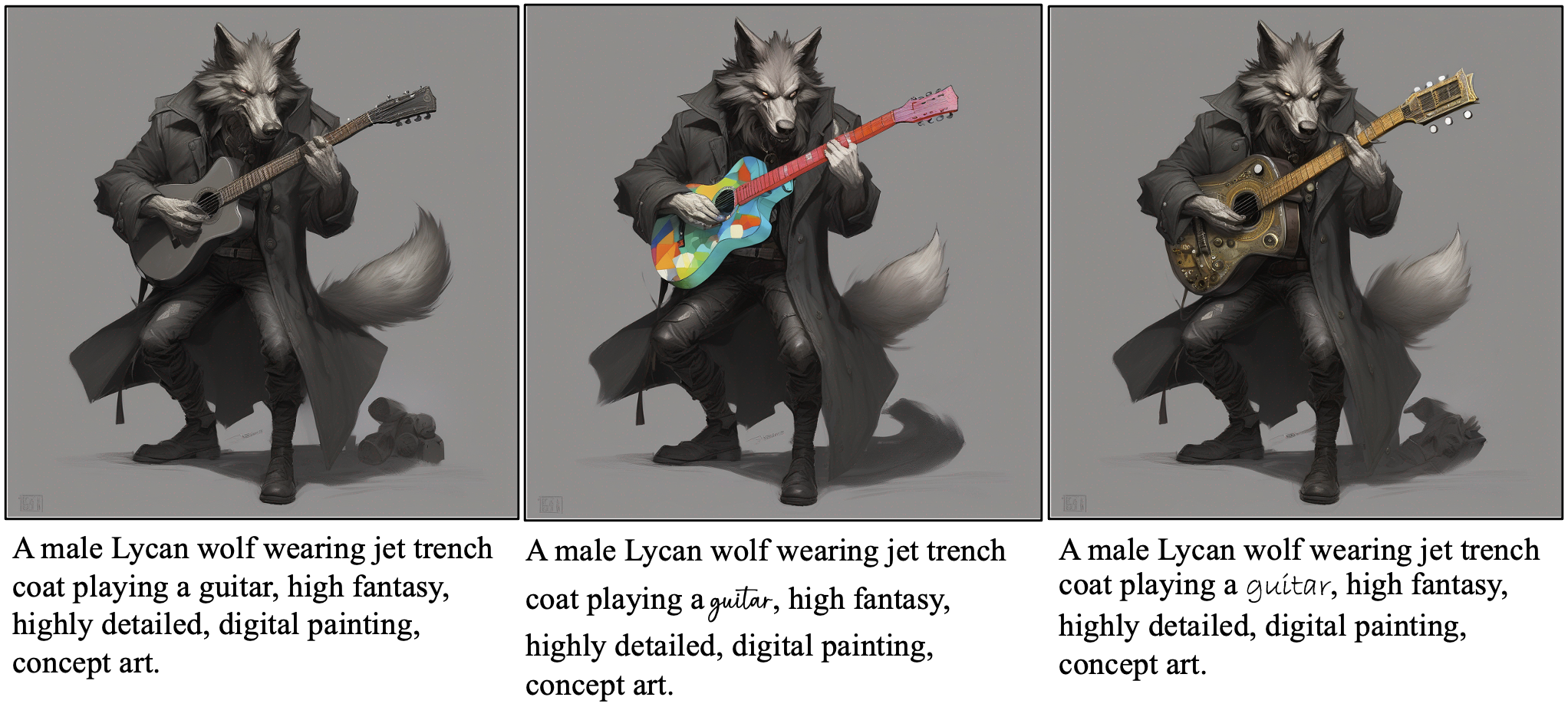

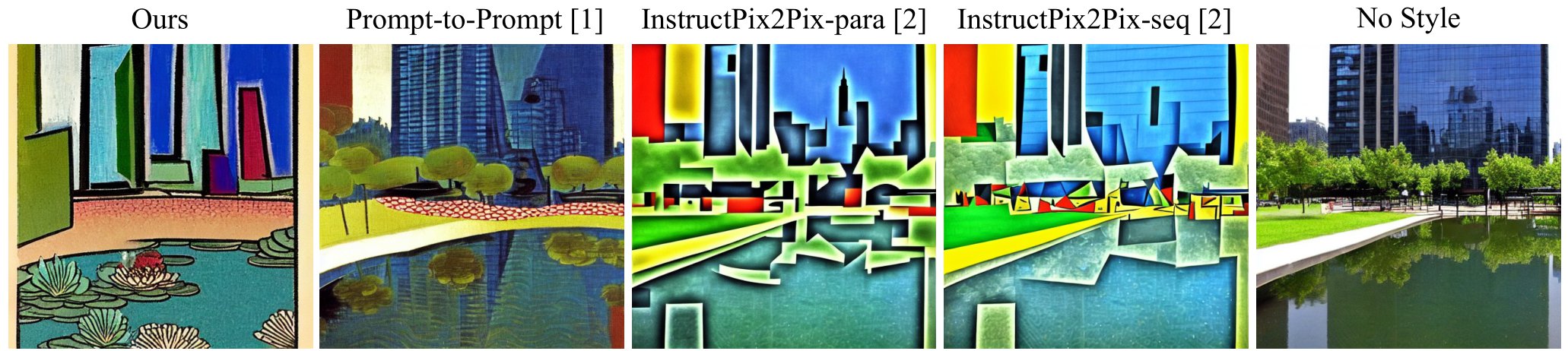

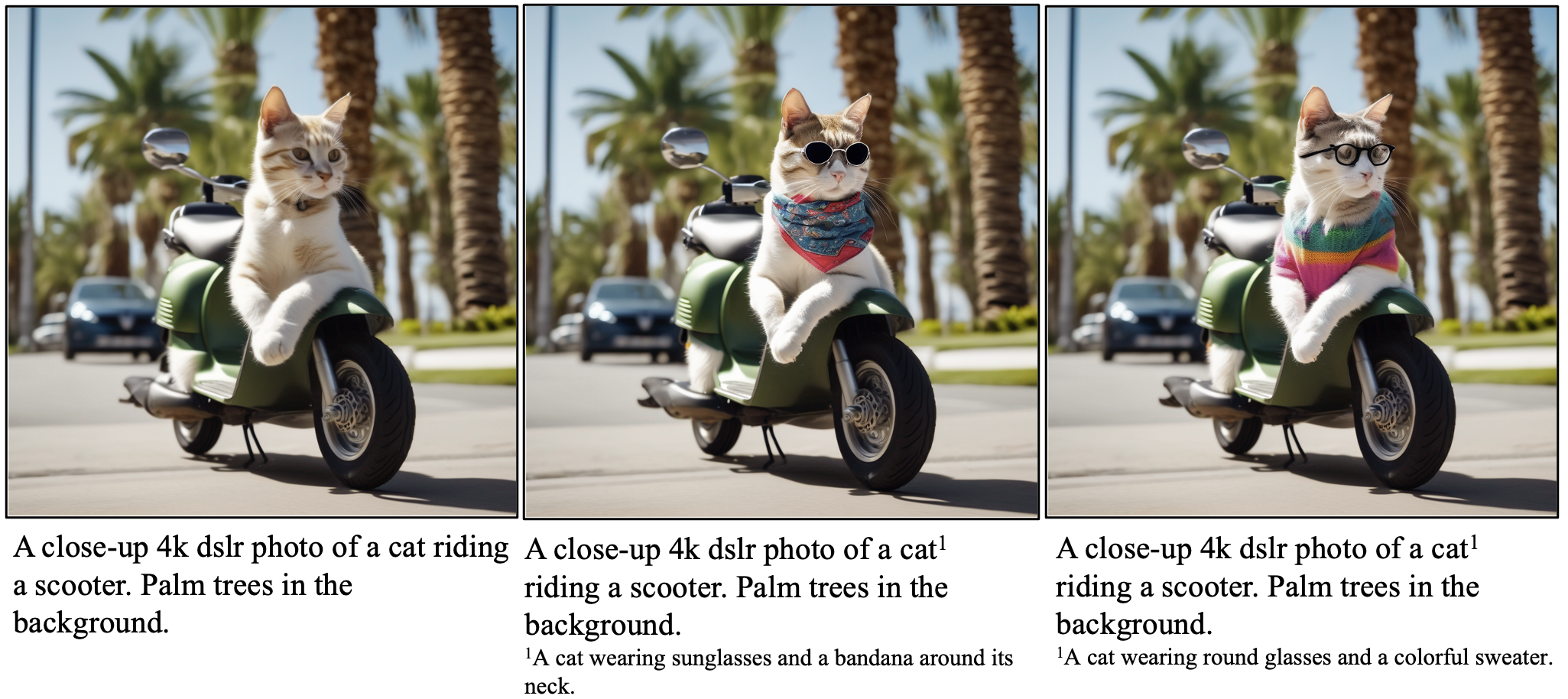

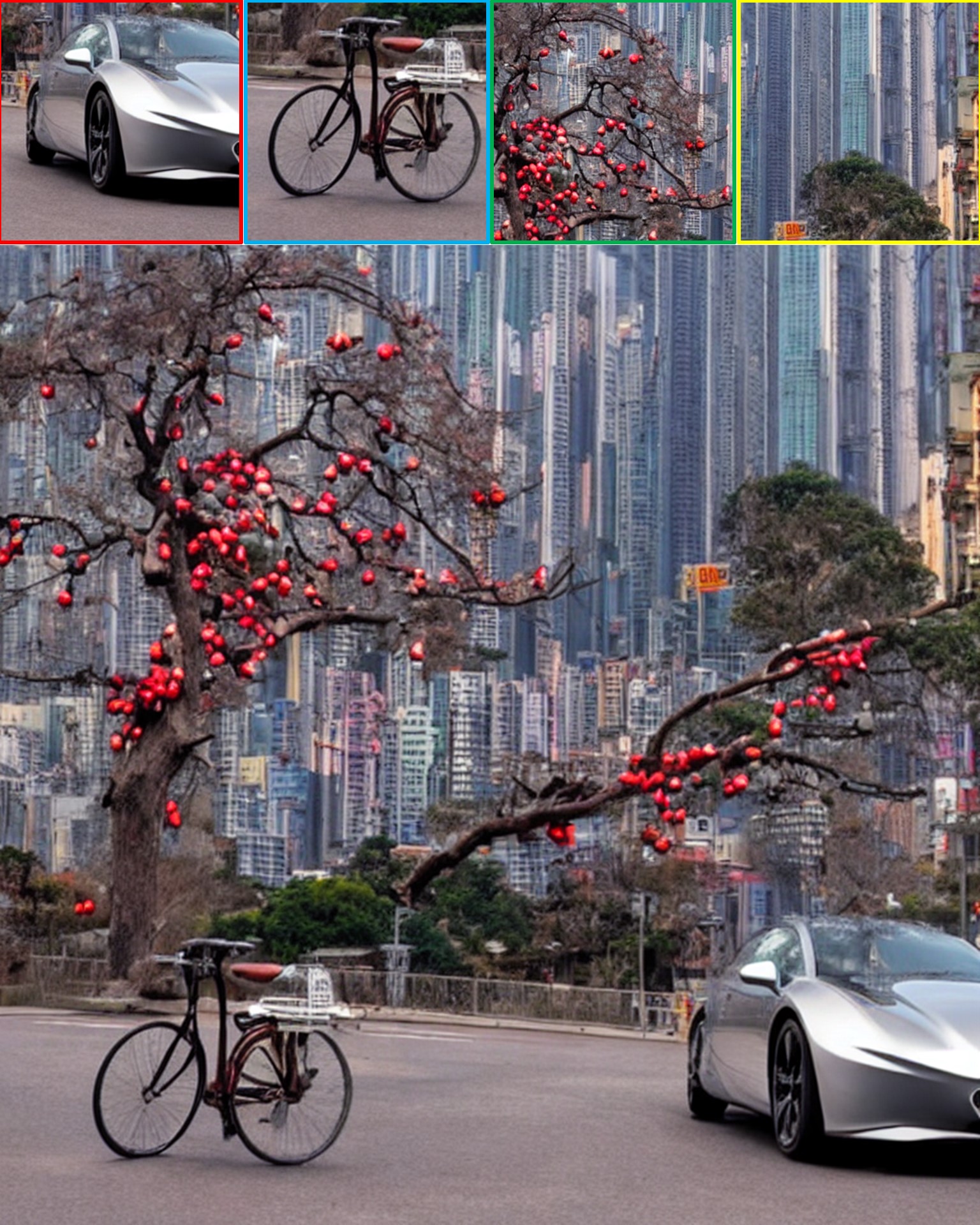

We explore using versatile format information from rich text, such as font size, color, style, and footnotes, for text-to-image generation and editing. Our framework enables several kinds of control, including intuitive local style control, precise color generation, and supplementary descriptions for long prompts. Check out our paper for more applications.
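To make the idea of a rich-text prompt concrete, here is a minimal sketch of how such a prompt could be represented as a list of text spans, each carrying optional format attributes. The `Span` class, the `flatten` helper, and the `"footnote"` attribute key are hypothetical names chosen for illustration; they are not the framework's actual data format. The sketch only shows how formatted regions can be recovered alongside the plain prompt.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """A run of prompt text with optional rich-text attributes (hypothetical schema)."""
    text: str
    attrs: dict = field(default_factory=dict)


def flatten(spans):
    """Return the plain prompt and a list of (start, end, attrs) for formatted spans."""
    plain = ""
    regions = []
    for span in spans:
        start = len(plain)
        plain += span.text
        if span.attrs:
            # Record the character range so a model could attend to this region.
            regions.append((start, len(plain), span.attrs))
    return plain, regions


# One of the example prompts from this page: the word "hat" carries a footnote.
spans = [
    Span("A close-up photo of a corgi wearing a "),
    Span("hat", {"footnote": "A lady's hat."}),
    Span(", beach and ocean in the background."),
]

plain, regions = flatten(spans)
print(plain)
for start, end, attrs in regions:
    print(repr(plain[start:end]), attrs)
```

Other attributes mentioned above (font size, color, style) could be stored in the same `attrs` dictionary under keys of your choosing; how each attribute is then turned into a generation constraint is described in the paper and is not covered by this sketch.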

A pizza with mushrooms, pepperonis, and pineapples on the top.

A Gothic church in the sunset with a beautiful landscape in the background.

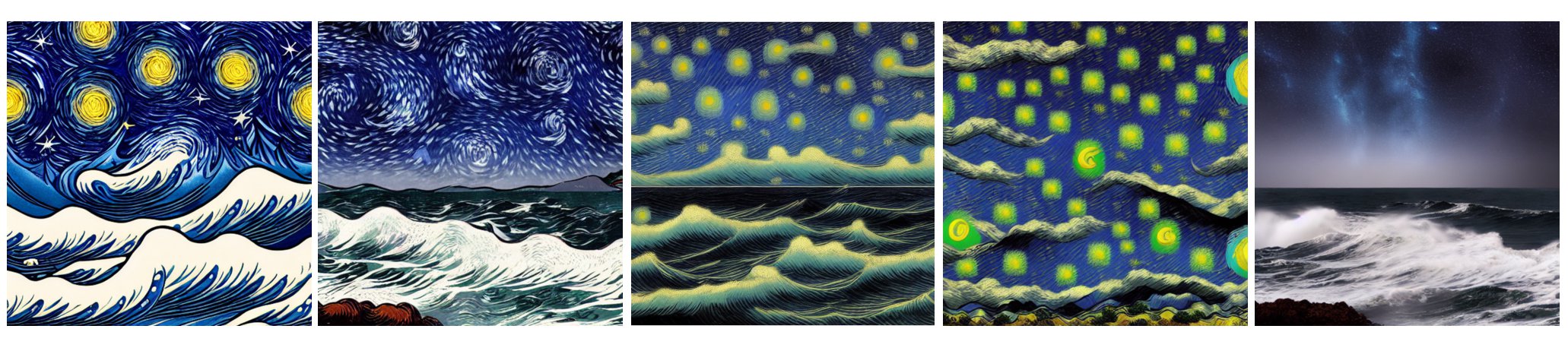

A night sky filled with stars above a turbulent sea with giant waves.

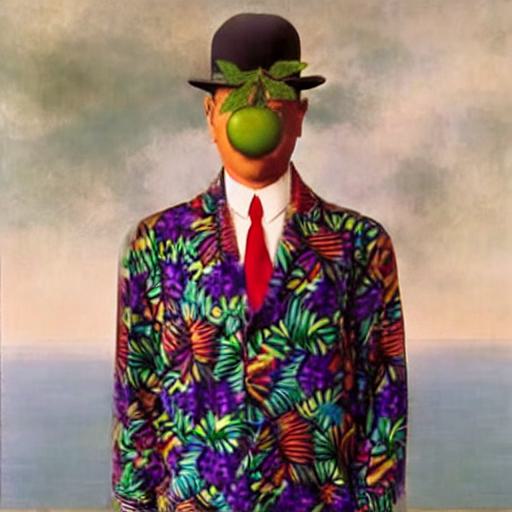

Styles: Van Gogh, Ukiyo-e

A close-up photo of a corgi wearing a hat¹, beach and ocean in the background.

Styles: Impressionism.

¹A lady's hat.

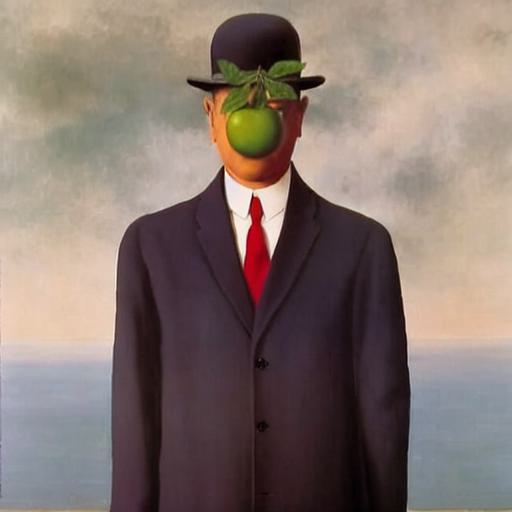

A man in suit¹ with a green apple on his face.

¹A colorful Hawaiian shirt.

A dog playing guitar on a boat, sailing in the ocean.

A girl with long hair sitting in a cafe, by a table with coffee¹ on it, best quality, ultra detailed, dynamic pose.

¹Ceramic coffee cup with intricate design, a dance of earthy browns and delicate gold accents. The dark, velvety latte is in it.

A pixel art of a duck with a gun¹ in hand, wearing a hat², minimalist, flat

¹A bouquet of flowers.

²A black hat decorated with a red flower.

A watercolor painting of the detective duck wearing a sheriff uniform¹ and holding a vintage handgun².

¹A dark green, washed jacket.

²A beautiful flower bouquet made of pink roses.

A kid wearing a backpack riding a bike in a street with fallen leaves.

A panda¹ standing on a cliff by a waterfall.

¹Happy kung fu panda, asian art, ultra detailed.

A portrait of a man with a golden beard wearing a hat.

Style: Cubism